Stellar Keys to Exoplanetary Worlds:

Initiative for a DFG Priority Program proposal

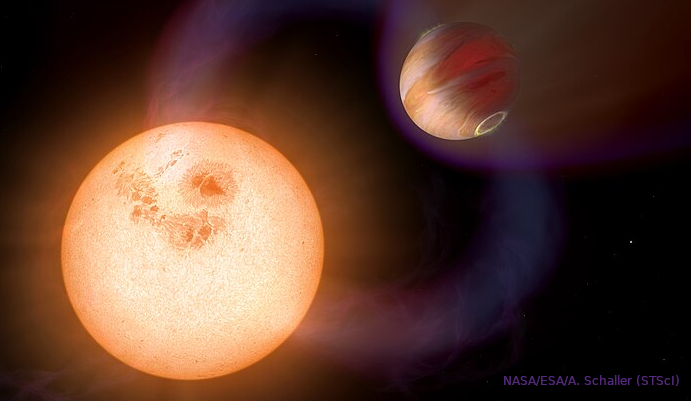

Understanding how exoplanets and their atmospheres evolve is a major goal in German and international research roadmaps. It has become clear that exoplanets cannot be understood without taking into account their stellar environment. In turn, exoplanets themselves can be used as probes for stellar phenomena that would otherwise remain inaccessible. It is time for a research initiative in Germany that combines the existing deep expertise in the physics of cool stars with the pressing questions of exoplanet research.

Following a one-year pause by the DFG in accepting proposals for Priority Programs (Schwerpunktprogramm, SPP), we plan to submit an SPP proposal to the DFG in October 2026. A programme committee has been established for this effort, consisting of Katja Poppenhäger, Juan Cabrera, Saskia Hekker, Laura Kreidberg, and Ansgar Reiners. The SPP is envisioned to focus on the stellar-exoplanetary connection, i.e. research questions in which the star is of fundamental importance for understanding the exoplanet, or vice versa.

Community Meeting - March 5, 2026

Our virtual community meeting took place on March 5, 2026, and generated rich and wide-ranging input from the stellar and exoplanetary communities in Germany. The discussion spanned two rounds of moderated breakout sessions and identified key scientific questions for the coming years, as well as areas where close collaboration between the two communities will be essential. We thank all participants for their valuable contributions. The discussion has already brought the stellar and exoplanetary parts of the German community closer together!

What's Next

The programme committee is now drafting the SPP proposal, drawing directly on the scientific themes and community priorities that emerged from the meeting. Once a draft is available, the community will be invited to provide feedback before submission to the DFG in October 2026.